Understanding the Generative AI Ecosystem: From LLMs to Agentic AI

Generative Artificial Intelligence (Gen AI) has rapidly evolved from an experimental research field into a foundational technology shaping modern applications, from intelligent assistants to autonomous decision-making systems. At its core, Gen AI builds on well-established concepts in artificial intelligence (AI) and machine learning (ML), yet introduces new paradigms and tools that enable machines not only to understand data but also to generate content, reason over context, and take actions.

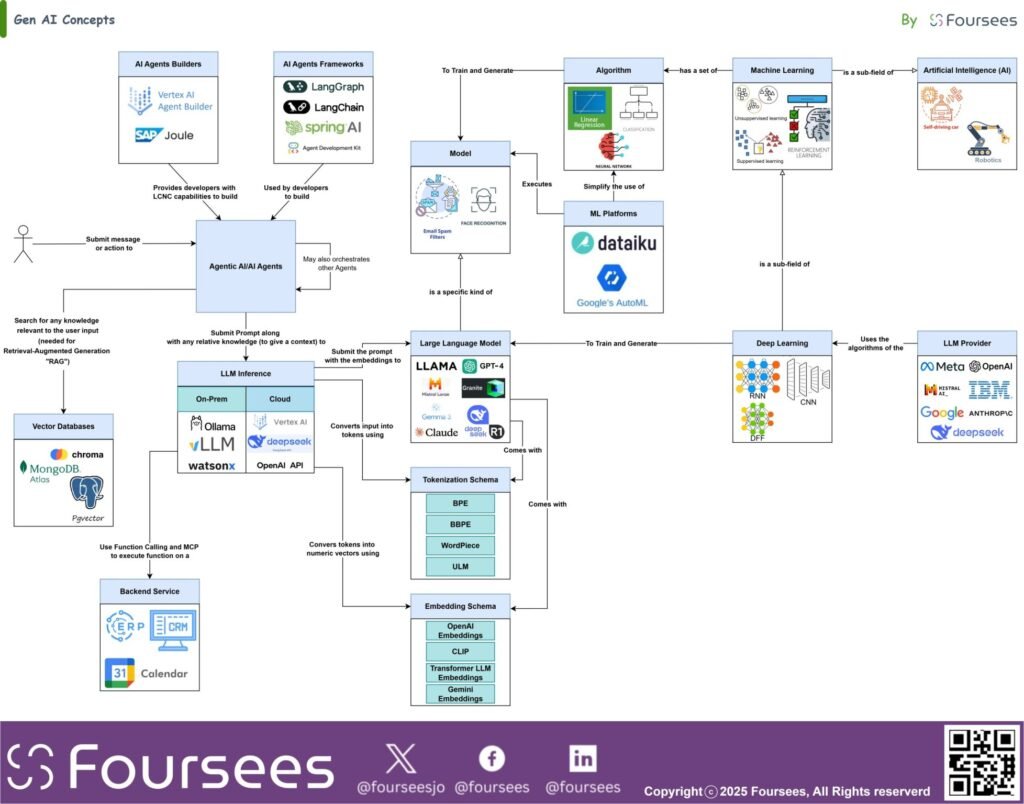

To understand the Gen AI landscape, it is important to see it not as a single model or tool, but as an ecosystem of interconnected concepts. These range from foundational disciplines such as AI, machine learning, and deep learning to specialized model families such as large language models (LLMs), and further to enabling mechanisms such as tokenization, embeddings, inference, Retrieval-Augmented Generation (RAG), function calling, and AI agents. Together, these components form the architecture of modern intelligent systems.

From Artificial Intelligence to Generative AI

At the highest level, Artificial Intelligence is the broad field concerned with creating systems capable of performing tasks that typically require human intelligence. This includes perception, reasoning, pattern recognition, prediction, decision-making, and autonomous behavior.

Within AI, Machine Learning is a subfield that enables systems to learn from data rather than relying solely on explicit rules. Instead of being programmed for every scenario, machine learning models infer patterns from historical data and use them to make predictions or classifications.

A more advanced branch of machine learning is Deep Learning, which uses multi-layered neural networks to learn highly complex patterns from large datasets. Deep learning has enabled many recent AI breakthroughs, particularly in image recognition, speech processing, and natural language understanding.

Generative AI emerges on top of this progression. While traditional ML often focuses on classification, prediction, or optimization, Gen AI focuses on generation. It enables machines to produce text, code, images, audio, and other content in ways that are context-aware and increasingly human-like.

The Role of Algorithms, Models, and Platforms

The conceptual foundation of AI systems starts with algorithms. These are the mathematical and computational methods used to learn patterns from data or perform tasks such as regression, classification, clustering, and neural computation. Machine learning uses collections of such algorithms, while deep learning depends heavily on neural network-based approaches.

When these algorithms are trained on data, they produce models. A model is the trained artifact that can execute a task, such as recognizing faces, filtering spam, generating text, or answering questions. In other words, the algorithm defines the learning method, while the model is the trained result of that process.
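The distinction between algorithm and model can be made concrete with a small sketch. It assumes ordinary least squares as the algorithm and a hypothetical `LinearModel` class as the trained artifact; both names and the sample data are illustrative and do not come from any particular platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinearModel:
    """The trained artifact: it only stores learned parameters and predicts."""
    slope: float
    intercept: float

    def predict(self, x: float) -> float:
        return self.slope * x + self.intercept


def fit_least_squares(xs, ys) -> LinearModel:
    """The algorithm: a learning procedure that turns data into a model."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return LinearModel(slope=slope, intercept=mean_y - slope * mean_x)


# Illustrative training data that follows y = 2x + 1.
model = fit_least_squares([1, 2, 3, 4], [3, 5, 7, 9])
print(model.predict(5))
```

The same `fit_least_squares` procedure run on different data would yield a different `LinearModel`, which is the sense in which the algorithm defines the method and the model is its result.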

Organizations often rely on ML platforms to simplify the process of building, training, tuning, and deploying these models. Such platforms accelerate experimentation and productionization by abstracting much of the low-level operational complexity.

Large Language Models as a Specialized Class of Deep Learning Models

A Large Language Model (LLM) is a specialized kind of deep learning model designed to process, understand, and generate human language. LLMs are trained on vast amounts of textual data and learn statistical and semantic relationships among words, phrases, and concepts. This allows them to answer questions, summarize text, generate content, translate languages, assist with coding, and support conversational experiences.

LLMs have become the centerpiece of many Gen AI solutions because they combine language understanding with language generation at scale. However, they do not operate in isolation. Their capabilities depend on several supporting computational mechanisms.

Tokenization: Turning Language into Processable Units

Before an LLM can process text, the input must be tokenized. Tokenization is the process of breaking text into smaller units called tokens. These tokens may represent words, subwords, characters, or other language fragments, depending on the tokenization scheme used.

This step is essential because language models do not directly process raw sentences as humans see them. Instead, they operate on sequences of token IDs, the numeric indices of tokens in the model's vocabulary. The quality and structure of tokenization affect context handling, vocabulary coverage, efficiency, and downstream performance.
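As a rough illustration, the sketch below splits text into word and punctuation tokens and maps them to integer IDs. It is a deliberately simplified scheme: production LLMs typically use learned subword tokenizers such as byte-pair encoding, and the function names and the `<unk>` fallback token here are assumptions made for the example.

```python
import re


def tokenize(text):
    """Split lowercased text into word tokens and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text.lower())


def build_vocab(tokens):
    """Assign each distinct token an integer ID; ID 0 is reserved for unknowns."""
    vocab = {"<unk>": 0}
    for token in tokens:
        vocab.setdefault(token, len(vocab))
    return vocab


def encode(text, vocab):
    """Convert text to token IDs, falling back to <unk> for unseen tokens."""
    return [vocab.get(token, vocab["<unk>"]) for token in tokenize(text)]


corpus_tokens = tokenize("Agents use tools, and tools use APIs.")
vocab = build_vocab(corpus_tokens)
print(corpus_tokens)
print(encode("Tools use agents!", vocab))  # "!" was never seen, so it maps to 0
```

The unknown-token fallback hints at why vocabulary coverage matters: anything the tokenizer cannot represent well is effectively lost to the model.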

Embeddings: Converting Meaning into Numeric Representation

Once text is tokenized, it can be transformed into embeddings. Embeddings are numeric vector representations that capture semantic meaning. They allow words, sentences, and documents with similar meanings to be positioned close to one another in vector space.

Embeddings are fundamental to modern Gen AI systems because they enable semantic search, similarity matching, contextual retrieval, clustering, and knowledge grounding. They form the bridge between natural language and computational understanding.
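Closeness in vector space is commonly measured with cosine similarity. The sketch below uses tiny hand-written three-dimensional vectors purely for illustration; real embeddings come from a trained model and usually have hundreds or thousands of dimensions, and the `TOY_EMBEDDINGS` values are invented.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1.0 means similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up vectors: the first two are placed close together on purpose.
TOY_EMBEDDINGS = {
    "invoice": [0.9, 0.1, 0.0],
    "receipt": [0.8, 0.2, 0.1],
    "holiday": [0.0, 0.2, 0.9],
}

related = cosine_similarity(TOY_EMBEDDINGS["invoice"], TOY_EMBEDDINGS["receipt"])
unrelated = cosine_similarity(TOY_EMBEDDINGS["invoice"], TOY_EMBEDDINGS["holiday"])
print(f"invoice vs receipt: {related:.3f}")
print(f"invoice vs holiday: {unrelated:.3f}")
```

Semantic search and similarity matching reduce to this kind of comparison, applied across many stored vectors.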

LLM Inference: The Runtime Execution of Intelligence

After a model is trained, it must be run to process prompts and generate outputs. This stage is known as LLM inference. During inference, a prompt is sent to the model, which interprets the context, applies its learned parameters, and produces a response.

Inference can take place in different environments, including cloud-hosted platforms, managed APIs, and on-premises runtimes. It is the operational layer through which users and applications actually consume LLM capabilities.
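At its simplest, text generation during inference is a loop that repeatedly predicts the next token and appends it to the sequence. The sketch below imitates that loop with greedy decoding over a made-up lookup table; `TOY_NEXT_TOKEN_PROBS` is a stand-in for a trained model's learned parameters, and a real LLM conditions on the whole context rather than only the last token.

```python
# Invented next-token probabilities, keyed by the most recent token only.
TOY_NEXT_TOKEN_PROBS = {
    "the": {"model": 0.6, "prompt": 0.4},
    "model": {"generates": 0.7, "<eos>": 0.3},
    "generates": {"text": 0.9, "<eos>": 0.1},
    "text": {"<eos>": 1.0},
}


def generate(prompt_tokens, max_new_tokens=10):
    """Greedy decoding: always pick the most probable next token."""
    tokens = list(prompt_tokens)
    if not tokens:
        return tokens
    for _ in range(max_new_tokens):
        distribution = TOY_NEXT_TOKEN_PROBS.get(tokens[-1])
        if not distribution:
            break  # the toy table has no entry for this token
        next_token = max(distribution, key=distribution.get)
        if next_token == "<eos>":
            break  # end-of-sequence marker stops generation
        tokens.append(next_token)
    return tokens


print(generate(["the"]))
```

The `max_new_tokens` cap mirrors the output-length limit that hosted inference services generally expose in some form.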

Retrieval-Augmented Generation and Vector Databases

One limitation of standalone LLMs is that they rely primarily on what was learned during training and the prompt provided at runtime. To improve factual grounding and relevance, many Gen AI solutions use Retrieval-Augmented Generation (RAG).

RAG enhances generation by retrieving relevant external knowledge and injecting it into the prompt context before inference. This allows the model to respond using fresh, organization- or domain-specific information rather than relying solely on static pretraining.

This retrieval process is often powered by vector databases, which store embeddings of documents, records, or knowledge assets. When a user submits a query, the system converts the query into an embedding and searches for semantically similar vectors. The retrieved results are then passed to the LLM as contextual input.

This is a major step in moving from generic language generation to context-aware, enterprise-ready intelligence.
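The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration under strong assumptions: word counts stand in for learned embeddings, a Python list stands in for a vector database, and the documents, function names, and prompt wording are all invented for the example.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: a bag-of-words count vector (real systems use a model)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


DOCUMENTS = [
    "Refunds are processed within 14 days of the return request.",
    "The office is closed on public holidays.",
    "Support tickets are answered within one business day.",
]

# Stand-in for a vector database: each document stored with its embedding.
INDEX = [(doc, embed(doc)) for doc in DOCUMENTS]


def retrieve(query, k=1):
    """Return the k documents most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(INDEX, key=lambda item: cosine(query_vector, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def build_prompt(query, k=1):
    """Inject the retrieved context into the prompt that would go to the LLM."""
    context = "\n".join(retrieve(query, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


print(build_prompt("How long do refunds take?"))
```

The resulting prompt string is what would be passed to the model at inference time; swapping the toy `embed` function for a real embedding model and the list for a vector store would leave the overall shape of the flow unchanged.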

Function Calling and Integration with External Systems

Modern Gen AI systems are no longer limited to generating text. Through function calling, an LLM can identify when a task requires an external action and invoke predefined tools or services. These may include checking a calendar, querying an ERP system, retrieving CRM data, sending a message, or performing calculations.

This capability turns the LLM from a passive responder into an active orchestrator capable of interacting with real systems. When combined with APIs, backend services, and enterprise applications, function calling makes Gen AI operationally useful in business processes.
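In practice, function calling usually means the model emits a structured request naming a tool and its arguments, and the application executes it. The sketch below shows only the application side, with a hard-coded JSON string standing in for model output. The tool names, the JSON shape, and the `dispatch` helper are assumptions for illustration and do not reflect any specific vendor's API.

```python
import json


def get_calendar_events(date: str) -> list:
    """Hypothetical stand-in for a real calendar service lookup."""
    return [f"Team sync on {date}"]


def add(a: float, b: float) -> float:
    """A trivial calculation tool."""
    return a + b


# Registry of predefined tools the model is allowed to invoke.
TOOLS = {"get_calendar_events": get_calendar_events, "add": add}


def dispatch(tool_call_json: str):
    """Parse a structured tool call and run the matching registered function."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"Unknown tool: {name}")
    return TOOLS[name](**call["arguments"])


# Pretend the LLM produced this instead of a plain-text answer.
model_output = '{"name": "add", "arguments": {"a": 2, "b": 3}}'
print(dispatch(model_output))
```

Restricting execution to an explicit registry is a deliberate choice here: the model proposes an action, but only predefined tools can actually run.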

AI Agents and Agentic AI

The next stage in the evolution of Gen AI is the emergence of AI agents and Agentic AI. An AI agent is more than a single prompt-response model. It is a goal-driven system that can reason, plan, decide on next steps, use tools, retrieve information, and sometimes coordinate with other agents to complete tasks.

Agentic AI refers to the broader pattern of designing systems in which AI acts with a degree of autonomy to pursue objectives through multi-step workflows. Rather than merely answering a question, an agent can break down a task, gather needed context, invoke tools, refine its plan, and deliver a more complete outcome.

This shift is significant because it transforms Gen AI from content generation into action-oriented intelligence.
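The multi-step behavior of an agent can be pictured as a loop: decide on the next action, invoke a tool, observe the result, and repeat until the goal is met. In the sketch below, a scripted `scripted_planner` function stands in for the LLM's reasoning, and the inventory tools, goal format, and returned values are all hypothetical.

```python
def scripted_planner(goal, observations):
    """Stand-in for an LLM choosing the next step from the goal and what is known."""
    if "inventory" not in observations:
        return "check_inventory", {"item": goal["item"]}
    shortfall = goal["quantity"] - observations["inventory"]
    if shortfall > 0 and "order" not in observations:
        return "place_order", {"item": goal["item"], "quantity": shortfall}
    return "finish", {}


def check_inventory(item):
    """Hypothetical tool: pretend three units are in stock."""
    return {"inventory": 3}


def place_order(item, quantity):
    """Hypothetical tool: pretend the order was accepted."""
    return {"order": f"ordered {quantity} x {item}"}


TOOLS = {"check_inventory": check_inventory, "place_order": place_order}


def run_agent(goal, max_steps=5):
    """Plan, act, and observe in a loop until the planner finishes or steps run out."""
    observations = {}
    trace = []
    for _ in range(max_steps):
        action, arguments = scripted_planner(goal, observations)
        trace.append(action)
        if action == "finish":
            break
        observations.update(TOOLS[action](**arguments))
    return trace, observations


trace, observations = run_agent({"item": "widget", "quantity": 10})
print(trace)
print(observations)
```

The `max_steps` limit is one simple way to bound autonomy; a production agent would typically add further safeguards around which actions it may take.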

Frameworks and Builders for Agentic Systems

As agent-based systems grow more sophisticated, specialized frameworks and agent builders help developers design, orchestrate, and deploy them. These tools provide patterns for memory, tool use, workflow control, multi-agent coordination, and state management.

Some focus on low-code or no-code construction, while others target developers who need programmable orchestration. Together, they are accelerating the development of enterprise-grade agentic solutions.

MCP and Multi-Agent Interoperability

As multiple agents, tools, and models interact, interoperability becomes critical. This is where coordination approaches such as the Model Context Protocol (MCP) become important. MCP is an open protocol that standardizes how models and agents connect to external tools, services, and data sources, giving the components of a larger system a common way to discover and invoke shared capabilities.

In increasingly distributed Gen AI environments, such protocols support consistency, composability, and controlled interaction across heterogeneous components. This is especially important for multi-agent systems where different agents may specialize in reasoning, retrieval, planning, or execution.
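To give a sense of what such standardization looks like on the wire, MCP messages are built on JSON-RPC 2.0, and a tool invocation is a request with the method `tools/call`. The sketch below only constructs such a request as a Python dictionary; the helper name, the `get_weather` tool, and its arguments are hypothetical, and transport, initialization, and response handling are omitted.

```python
import json


def make_tool_call_request(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request asking a server to run one of its tools."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# "get_weather" is an invented tool name used only for illustration.
request = make_tool_call_request(1, "get_weather", {"city": "Berlin"})
print(json.dumps(request, indent=2))
```

Because every tool is invoked through the same message shape, an agent can work with tools from different providers without bespoke integration code for each one.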

A Connected Ecosystem, Not Isolated Components

The conceptual diagram highlights an important reality: Gen AI is not a standalone model, but a connected ecosystem.

At the base are AI, machine learning, deep learning, algorithms, and models. On top of these sit large language models as specialized deep learning systems. Supporting them are tokenization, embeddings, and inference runtimes. Extending their usefulness are RAG, vector databases, and function calling. Coordinating these capabilities are agents, agent frameworks, and communication protocols such as MCP. Finally, backend systems and enterprise platforms provide the operational environment in which these intelligent capabilities create business value.

Understanding these relationships is essential for anyone designing, evaluating, or governing Gen AI solutions. It helps distinguish hype from architecture and experimentation from scalable design.

Final Thought

Generative AI is reshaping the technology landscape not because of a single model or tool, but because multiple concepts now work together as a coherent ecosystem. From foundational AI disciplines to advanced language models, from embeddings and retrieval to agents and orchestration, each component plays a specific role in enabling intelligent, adaptive, and context-aware systems.

The real value of Gen AI lies not only in generating outputs but in connecting models, data, reasoning, and actions into solutions that can assist, augment, and increasingly collaborate with humans in meaningful ways.
